AI video tools have reached an impressive technical milestone. The generated footage looks increasingly professional—sometimes stunningly so. Yet for creators attempting to use these tools for actual projects, a fundamental frustration persists.

You craft the perfect prompt. The system produces exactly what you envisioned. Then you attempt to generate a follow-up clip featuring the same character, product, or setting, and suddenly everything shifts. The protagonist’s appearance changes subtly but noticeably. Brand colors drift. Visual style becomes inconsistent across what should be a cohesive series.

This consistency challenge represents more than a minor inconvenience. For marketers managing campaigns, educators building course content, or creators developing serialized work, visual incoherence makes AI video generation practically unusable despite its speed advantages. The technology works, yet the workflow fails.

Pollo AI has gained attention in creator communities specifically for addressing this pain point. After three weeks of intensive testing across multiple real-world projects, here’s what actually works, what remains limited, and whether this platform deserves integration into your creative workflow.

Why Most AI Video Tools Struggle with Consistency

Understanding the technical limitation helps explain why this problem persists across most platforms.

Standard AI video generation relies on diffusion models trained to produce plausible individual frames based on text descriptions. When you input “woman walking through coffee shop,” the system generates each frame somewhat independently, loosely guided by your prompt. Frame 23 might interpret visual elements slightly differently than frame 47. Hair color shifts. Clothing details vary. The environment’s spatial relationships rearrange themselves.

Individually, each frame appears acceptable. Sequentially, the result feels dreamlike in the worst sense—nothing remains stable or predictable.

Traditional production solves this through physical continuity: the same actor, wardrobe, location, and lighting across every shot. AI generation lacks this physical anchor. Most platforms address the symptom through post-processing fixes or by limiting clip length, rather than solving the root cause: giving creators mechanisms to establish and maintain visual references across multiple generations.

What This Platform Does Differently

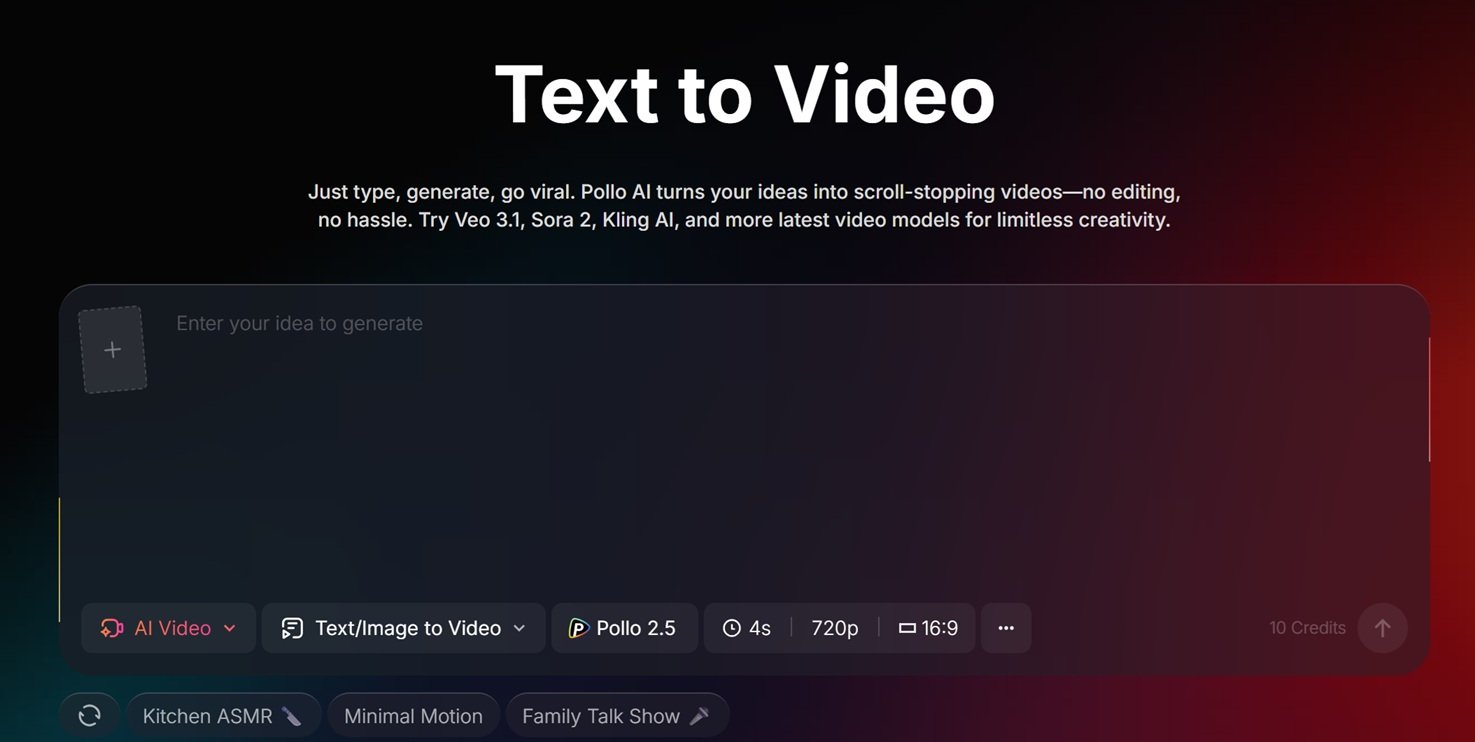

The core innovation here is reference-based generation. Rather than treating each video as an isolated interpretation of a text prompt, the system allows you to upload images, previous clips, or style references that guide subsequent outputs.

This transforms the creative dynamic significantly. You can generate a character or visual style you appreciate, save that reference, then produce new scenes featuring consistent elements from different angles, in different settings, performing different actions. The visual anchor maintains stability that pure text prompts cannot achieve.

The text-to-video workflow follows a structured approach: establishing references first, generating with those constraints applied, then refining through iterative adjustment. You’re not merely describing desired outcomes; you’re providing visual benchmarks and asking the system to work within those established parameters.
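The three-step loop described here (establish a reference, generate against it, refine by regenerating) can be sketched in a few lines of Python. This is only an illustration of the workflow's shape, not the platform's actual API: `generate_series`, `fake_generate`, `fake_check`, and the reference identifier are all hypothetical names, and the "generator" is a stub that deliberately drifts on the first attempt for one prompt.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Clip:
    prompt: str
    reference: str   # identifier of the shared visual reference
    attempts: int    # generations used for this prompt
    accepted: bool   # True if some attempt passed the consistency check


def generate_series(
    prompts: List[str],
    reference: str,
    generate: Callable[[str, str], str],
    is_consistent: Callable[[str, str], bool],
    max_attempts: int = 3,
) -> List[Clip]:
    """Generate one clip per prompt, all anchored to the same reference."""
    results = []
    for prompt in prompts:
        attempts = 0
        accepted = False
        # Refine by regenerating until the output matches the reference
        # or the attempt budget runs out.
        while attempts < max_attempts and not accepted:
            attempts += 1
            output = generate(prompt, reference)
            accepted = is_consistent(output, reference)
        results.append(Clip(prompt, reference, attempts, accepted))
    return results


# --- Hypothetical stand-ins for a real generator and a real review step ---
_calls: Dict[str, int] = {}


def fake_generate(prompt: str, reference: str) -> str:
    """Stub: 'drifts' on the first attempt for any prompt mentioning rain."""
    _calls[prompt] = _calls.get(prompt, 0) + 1
    drifted = "rain" in prompt and _calls[prompt] == 1
    return "drifted" if drifted else reference


def fake_check(output: str, reference: str) -> bool:
    """Stub: a clip is 'consistent' if it carries the reference identifier."""
    return output == reference


series = generate_series(
    ["presenter at desk", "presenter in rain"],
    reference="presenter_v1",
    generate=fake_generate,
    is_consistent=fake_check,
)
for clip in series:
    print(clip.prompt, clip.attempts, clip.accepted)
```

In practice the consistency check would be a human review or an image-similarity score rather than a string comparison; the point of the sketch is that the reference stays fixed across every prompt while only the prompt and the attempt count vary.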

For specific professional applications, this capability proves particularly valuable. Product marketers can generate multiple lifestyle scenarios featuring identical item representation without photography reshoots. Character-driven content creators can maintain protagonist appearance across episode arcs. Brand teams can enforce color palettes and visual aesthetics without manual correction overhead.

The style transfer functionality extends this utility—applying aesthetic qualities from one reference to new content. This supports matching generated footage to existing brand assets or maintaining cohesive seasonal campaign appearances.

Real-World Testing: Hands-On Experience

Testing proceeded across three distinct project types: a five-part educational series requiring character consistency, a product launch campaign needing multiple lifestyle scenarios, and experimental creative work exploring system limitations.

The educational series demonstrated the platform’s core strength. Establishing a reference image for the presenter character, then generating fifteen clips across different topics, yielded a consistent character appearance in twelve of fifteen generations, an eighty percent success rate. The remaining three required minor correction. Comparable testing on platforms lacking reference capabilities produced roughly thirty percent consistency, demanding extensive regeneration or acceptance of visual variation.
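To make the gap between those two rates concrete, the short calculation below works out the success rate from the clip counts and estimates how many generations a fifteen-clip series would need on average. The estimate rests on a simplifying assumption that is not from the testing itself: each generation succeeds independently with the same probability, so the expected number of attempts per usable clip is 1 divided by the rate. The function names are illustrative.

```python
def consistency_rate(consistent: int, total: int) -> float:
    """Share of generations that kept the character consistent."""
    return consistent / total


def expected_generations(clips_needed: int, rate: float) -> float:
    """Average generations needed, assuming independent attempts.

    Under that assumption each usable clip takes 1 / rate attempts on average.
    """
    return clips_needed / rate


with_reference = consistency_rate(12, 15)   # twelve of fifteen clips
without_reference = 0.30                    # rough figure from comparison testing

print(f"with reference:    {with_reference:.0%}, "
      f"~{expected_generations(15, with_reference):.2f} generations for 15 clips")
print(f"without reference: {without_reference:.0%}, "
      f"~{expected_generations(15, without_reference):.2f} generations for 15 clips")
```

Under that assumption, a fifteen-clip series needs about 19 generations at eighty percent consistency versus about 50 at thirty percent, which is where the "extensive regeneration" cost comes from.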

The product campaign revealed workflow advantages. Uploading product photography as references enabled lifestyle scenario generation across office, home, and outdoor settings while maintaining accurate product representation. Total production time reached six hours versus an estimated three days for traditional location photography.

Experimental work exposed current boundaries. Abstract concepts without clear visual references challenged the system. Complex physics interactions—liquid dynamics, fabric movement—sometimes broke consistency despite reference guidance. The technology performs optimally when provided concrete visual anchors rather than relying entirely on textual description.

Performance metrics proved solid if not exceptional. Generation times averaged forty-five seconds for a four-second clip, which is competitive but not market-leading. Output quality at 1080p resolution satisfied professional requirements, though 4K options remain limited compared to some alternatives.

What Independent Users Report

Controlled testing provides structured data, yet independent user experiences reveal how the technology performs in unpredictable real-world conditions. Community feedback patterns help validate, or challenge, those controlled findings.

Analysis of discussions across Reddit, Twitter, and specialized creator forums reveals consistent themes. Users frequently cite visual consistency as the primary differentiator, confirming testing results. The reference-based workflow receives particular appreciation from marketers and brand content creators who previously struggled with AI video unpredictability.

Critiques focus on different aspects. Some users find the reference setup process adds friction compared to simpler prompt-to-video alternatives. Others note that while consistency improved, the platform occasionally sacrifices creative flexibility—struggling with dramatic style shifts or highly unusual visual concepts when strong references are applied.

Pricing discussions appear regularly. The platform is positioned in the mid-market range: more expensive than basic generation tools but less costly than enterprise solutions. Users generally accept this positioning when consistency matters for specific projects, though they question its value for one-off or purely experimental work.

Third-party validation provides additional perspective. Aggregated user experiences on Trustpilot demonstrate satisfaction patterns, with particular strength in customer support responsiveness and platform reliability. Negative reviews cluster around specific feature limitations—credit consumption, generation limits, occasional facial distortion in complex angles—rather than fundamental service failures.

The review distribution, predominantly four and five stars with specific constructive criticism, suggests authentic user experiences. Common complaints indicate actual usage patterns rather than theoretical dissatisfaction, lending credibility to positive assessments.

Feature Assessment: Strengths and Limitations

Demonstrated strengths:

- Reference-based consistency genuinely addresses the core industry challenge

- Style transfer enables practical brand alignment

- Cross-platform availability supports flexible workflows

- Export compatibility integrates with professional editing environments

- Customer support responsiveness exceeds market averages

Current limitations:

- Generation speed competitive but not leading

- 4K output options restricted compared to some competitors

- Abstract concept handling weaker than concrete reference-based work

- Credit allocation can feel restrictive for high-volume production

- Learning curve steeper than simpler alternatives

Neutral characteristics:

- Mid-market pricing with value dependent on specific use cases

- Template library adequate without being extensive

- Audio generation basic, requiring external tools for professional sound design

Who Should Actually Use This Platform?

This solution serves specific creator profiles particularly effectively:

Brand marketers managing multi-asset campaigns where visual consistency across touchpoints matters more than raw generation speed.

Serial content creators developing character-driven or product-focused series where audience recognition depends on visual stability.

Small creative teams without dedicated video production resources who need reliable output without extensive technical troubleshooting.

Workflow-focused professionals who value integration capabilities and cross-platform availability over cutting-edge experimental features.

Less optimal for:

- Experimental artists prioritizing creative unpredictability and emergent aesthetics

- Single-project creators where setup time exceeds generation benefits

- Users requiring extensive audio-visual integration within unified platforms

- High-volume producers prioritizing generation speed above consistency

Final Assessment: Value for Working Creators

This platform occupies a specific, valuable niche in the expanding AI video ecosystem. It does not compete on raw generation speed or maximum resolution. Instead, it trades those metrics for reliability—the confidence that generated content will match creator intentions consistently across multiple assets.

For professionals whose work depends on that confidence, this trade proves worthwhile. The platform transforms AI video from an experimental demonstration into a production tool capable of serving professional workflows. The reference-based approach adds setup complexity, but it returns that investment through reduced iteration and correction time.

The technology remains imperfect. Abstract concepts challenge it. Speed and resolution lag some competitors. But for its target use case—maintaining visual coherence across generated video content—it delivers genuine value that alternatives struggle to match.

Whether that value justifies investment depends entirely on specific creative needs. If consistency matters for your projects, this platform deserves serious consideration. If you’re exploring AI video casually or prioritizing creative unpredictability, simpler alternatives might serve better.

The platform represents meaningful maturation for AI video technology—moving from impressive demonstrations toward reliable tools respecting professional requirements. That progression benefits the entire creative ecosystem, regardless of which specific solution you ultimately select.